The phrase “human-in-the-loop” has become the default reassurance whenever AI oversight comes under scrutiny. It appears in regulatory filings, vendor pitch decks, and compliance documentation. It implies that a real person is watching, reviewing, and keeping automated systems in check.

The uncomfortable reality is simpler than the marketing suggests. In most implementations, “human-in-the-loop” describes someone rubber-stamping decisions they did not make, cannot understand, and lack the authority to override.

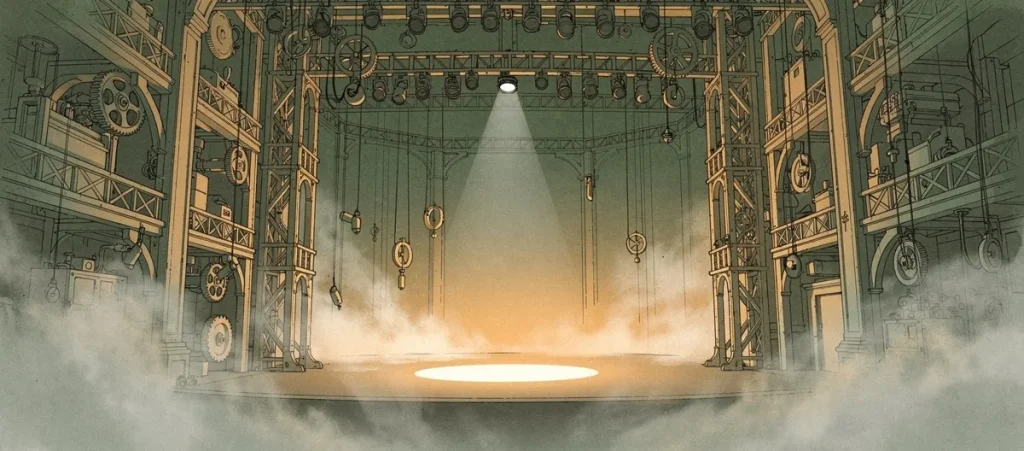

The core problem: Placing a person inside a system they cannot influence is not oversight. It is theater. And in candidate screening, theater has real consequences for real people.

This is not an abstract concern. The EEOC has made AI bias a top enforcement priority, and courts are already holding organizations liable for automated decisions that no human meaningfully reviewed. The question is no longer whether human oversight matters. The question is whether your version of it actually works.

The Illusion of Control

Consider a common scenario. A recruiter reviews candidates flagged by an AI screening tool. The system has already decided which candidates appear on the recruiter’s screen, which ones were filtered out, and what information accompanies each profile. The recruiter sees a curated highlight reel. They do not see the full game.

This is how limited human control operates in practice. The algorithm determines the thresholds, the filtering criteria, and the presentation layer. The human reviewer’s job narrows to a binary choice: approve or reject what the system has already pre-selected. That is validation, not evaluation.

The guidelines these reviewers follow are typically rigid, written by engineers and product teams the reviewer will never meet. Reviewers cannot question the system’s logic. They cannot advocate for design changes. They inherit the moral weight of outcomes without possessing any of the levers that shape those outcomes.

This dynamic creates a specific, measurable failure. When a reviewer can only see what the algorithm surfaces, they cannot catch what the algorithm misses. They become a passenger in a vehicle someone else is driving, pressing a button labeled “confirm” at programmed intervals. That is not oversight. It is decoration.

The Scale Problem No One Talks About

Even when organizations genuinely intend to provide human oversight, the math works against them. Automated screening systems process thousands of applications per day. Each human reviewer gets seconds per decision before the next one appears. At that pace, critical thinking is the first casualty.
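To make that math concrete, here is a minimal sketch of the time budget per decision. The function name and the load figures (5,000 applications, 3 reviewers, 6 focused hours each) are hypothetical and chosen only for illustration; substitute your own numbers.

```python
def seconds_per_decision(applications_per_day: int,
                         reviewers: int,
                         review_hours_per_reviewer: float) -> float:
    """Average seconds a reviewer can spend on each application."""
    if applications_per_day <= 0 or reviewers <= 0:
        raise ValueError("applications_per_day and reviewers must be positive")
    total_review_seconds = reviewers * review_hours_per_reviewer * 3600
    return total_review_seconds / applications_per_day


# Hypothetical load: 5,000 applications a day, 3 reviewers,
# each with 6 focused review hours.
budget = seconds_per_decision(5000, 3, 6.0)
print(f"{budget:.1f} seconds per decision")  # prints "13.0 seconds per decision"
```

Under those assumed numbers, each application gets about 13 seconds of human attention, and that is before breaks, context switching, or any follow-up questions.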

of companies allow AI to reject candidates with no human review

maintain full human oversight on all AI rejection decisions

of automated screenings occur outside business hours

Sources: Articsledge AI in Hiring 2026, Humanly AI Recruiter Playbook

When courts see applications rejected within minutes or during off-hours, they treat that as evidence that no human actually reviewed the decision. Speed of rejection is becoming a liability signal. If your “human oversight” operates at machine tempo, regulators and judges notice.
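If rejection timing is a liability signal, it is worth checking your own logs for it before anyone else does. The sketch below flags rejections that were recorded very soon after submission or outside business hours. The `Rejection` record, the five-minute threshold, and the 9-to-5 weekday window are all assumptions for illustration, not a legal standard.

```python
from dataclasses import dataclass
from datetime import datetime, time, timedelta


@dataclass(frozen=True)
class Rejection:
    candidate_id: str
    submitted_at: datetime   # when the application arrived
    decided_at: datetime     # when the rejection was recorded


# Assumed thresholds; tune them to your own process and time zone.
MIN_PLAUSIBLE_REVIEW = timedelta(minutes=5)
BUSINESS_START = time(9, 0)
BUSINESS_END = time(17, 0)


def timing_flags(r: Rejection) -> list[str]:
    """Return reasons this rejection looks unreviewed, based on timing alone."""
    flags = []
    if r.decided_at - r.submitted_at < MIN_PLAUSIBLE_REVIEW:
        flags.append("decided_too_fast")
    is_weekend = r.decided_at.weekday() >= 5  # Saturday or Sunday
    in_hours = BUSINESS_START <= r.decided_at.time() < BUSINESS_END
    if is_weekend or not in_hours:
        flags.append("decided_off_hours")
    return flags


# A rejection recorded two minutes after submission, at 2:16 a.m. on a weekday.
overnight = Rejection("c-1", datetime(2026, 1, 6, 2, 14), datetime(2026, 1, 6, 2, 16))
print(timing_flags(overnight))  # prints ['decided_too_fast', 'decided_off_hours']
```

A flag here proves nothing on its own. It marks the decisions where you should be able to show who reviewed what, and when.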

The result is predictable. Reviewers develop their own algorithmic shortcuts just to survive the volume. Pattern recognition replaces critical analysis. They become extensions of the machine rather than checks against it. The role of the recruiter collapses from evaluator to click-through validator.

Accountability That Dissolves on Contact

Here is where the pattern gets dangerous. When an AI-driven screening decision produces a discriminatory outcome, organizations point to their “human-in-the-loop” process as proof of due diligence. The reviewer becomes a shield. Their signature on the decision absorbs the liability while the actual architects of the system remain insulated.

The EEOC has been explicit: employers remain fully responsible for discriminatory outcomes when they use AI tools, even when those tools are designed or administered by a vendor. You cannot outsource accountability. And placing an underpowered reviewer between the algorithm and the outcome does not change who is responsible.

The Mobley v. Workday case demonstrated what happens when this structure fails. The court ordered the vendor to produce its entire customer list, exposing every organization using that system to potential scrutiny. The accountability vacuum created by shallow human oversight does not protect anyone. It exposes everyone.

“Employers remain responsible for ensuring compliance with federal EEO laws when they use software, algorithms, or AI to make employment decisions, even when those tools are designed or administered by a vendor.”

Equal Employment Opportunity Commission, Federal AI Guidance

This creates a cascade of misplaced responsibility. The low-level reviewer drowning in thousands of cases absorbs the blame. The engineers who built the biased algorithm stay insulated in their departments. The executives who prioritized speed over safety remain invisible in the accountability chain. The result is scapegoats instead of safeguards.

Compliance Theater: The Regulatory Loophole

Regulations like GDPR and the EU AI Act (with most obligations applying from August 2026) require human intervention in automated decisions that affect individuals. Organizations heard this and responded with the minimum viable compliance: low-paid contractors reviewing flagged cases with minimal training, rigid quotas that prioritize throughput over quality, and documentation that records “human review occurred” without measuring whether it was meaningful.

The result is ethical loopholes wide enough to drive entire fleets of biased algorithms through. The documentation says “human reviewed.” The reality is that the human had 90 seconds to evaluate a decision shaped by millions of data points and proprietary algorithms they have never seen. That is not oversight. It is paperwork.

The 2026 regulatory landscape is tightening. NYC Local Law 144 requires bias audits on automated employment decision tools. Colorado, Illinois, and California have imposed specific compliance requirements. The EEOC has settled its first AI screening discrimination case and signaled more are coming. Organizations treating human-in-the-loop as a checkbox will discover that checkboxes do not hold up in court.
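Bias audits of this kind generally rest on a simple calculation: compare each group's selection rate to the rate of the most-selected group. The sketch below computes that impact ratio. The function name, the group labels, and the counts are hypothetical, and the 0.8 threshold in the comments is the familiar four-fifths rule of thumb, not a statement of what any particular statute requires.

```python
from collections import Counter


def impact_ratios(outcomes):
    """outcomes: iterable of (category, selected) pairs.

    Returns {category: selection_rate / highest_selection_rate}.
    """
    totals, selected = Counter(), Counter()
    for category, was_selected in outcomes:
        totals[category] += 1
        if was_selected:
            selected[category] += 1
    rates = {c: selected[c] / totals[c] for c in totals}
    top = max(rates.values())
    if top == 0:
        raise ValueError("no candidates were selected in any category")
    return {c: rate / top for c, rate in rates.items()}


# Hypothetical data: 40 of 100 advanced in group_a, 24 of 100 in group_b.
outcomes = ([("group_a", True)] * 40 + [("group_a", False)] * 60
            + [("group_b", True)] * 24 + [("group_b", False)] * 76)
for category, ratio in impact_ratios(outcomes).items():
    # Ratios below 0.8 are commonly treated as a warning sign.
    print(category, round(ratio, 2))
```

In this made-up example group_b lands at 0.6, well under the four-fifths mark. A real audit involves far more than this arithmetic, but a team that cannot produce even this number has no basis for claiming its process is monitored.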

The pattern is not only a problem with internal oversight. It also shows up in how AI vendors sell their products. When a vendor answers hard questions by deferring to “the platform” or “the AI” rather than to a named person with real technical depth, they are exhibiting the same accountability vacuum on the sales side of the table. We wrote about a real example of this in “Why We Almost Chose Delve and Went Another Way.” The sniff test applies to the people selling you the system just as much as it applies to the people running it.

What Real Oversight Actually Requires

The critique of human-in-the-loop is not a critique of human involvement. It is a critique of human involvement done badly. Real oversight requires structural commitments that most implementations skip entirely.

Accountability theater

- Reviewers see pre-filtered outputs only

- AI produces scores, rankings, and pass/fail decisions

- No audit trail connecting evidence to outcomes

- Reviewer cannot override or question system logic

- Speed quotas override careful evaluation

Genuine accountability

- Reviewers see full transcripts, not scores

- AI provides evidence, humans draw conclusions

- Every evaluation is explainable and replayable

- Conversation and evaluation are structurally separate

- No visual or tonal analysis of candidates

The difference between these two lists is the difference between defensible processes and legal exposure. When a screening decision can be replayed, examined, and explained, it can be defended. When it cannot, it is a liability waiting for a plaintiff.

Transparency is not optional here. Opening the black box means publishing how evaluation criteria work, documenting what data informs each decision, and ensuring that every stakeholder (candidates, reviewers, compliance teams) can understand what happened and why. That is the foundation. Everything else is decoration.
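One way to picture an explainable, replayable evaluation is as a record that ties the decision to the exact evidence behind it. The sketch below is an illustration only: the `EvaluationRecord` fields, the `fingerprint` helper, and the traceability check are hypothetical names, not a description of any particular product.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class EvaluationRecord:
    candidate_id: str
    criteria_version: str       # which published criteria were applied
    transcript: str             # full text the reviewer saw
    evidence: tuple[str, ...]   # quotes the reviewer relied on
    reviewer_id: str
    decision: str               # e.g. "advance" or "decline"
    rationale: str              # the reviewer's own explanation


def fingerprint(record: EvaluationRecord) -> str:
    """Stable SHA-256 digest, so a later change to the record is detectable."""
    payload = json.dumps(asdict(record), sort_keys=True, ensure_ascii=False)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def evidence_is_traceable(record: EvaluationRecord) -> bool:
    """True only if at least one quote is cited and each appears verbatim."""
    return bool(record.evidence) and all(
        quote in record.transcript for quote in record.evidence
    )


record = EvaluationRecord(
    candidate_id="c-1",
    criteria_version="v3",
    transcript="I led a migration of our billing service.",
    evidence=("led a migration",),
    reviewer_id="r-7",
    decision="advance",
    rationale="Direct ownership of a comparable project.",
)
print(evidence_is_traceable(record))  # prints True
```

The design choice worth noting is that the record stores what the reviewer actually saw and cited, not a score. Anyone replaying the decision later reads the same evidence the reviewer did.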

The Separation Principle

One architectural decision changes everything: separating the AI that conducts the conversation from the AI that evaluates the responses. Most platforms combine these functions, which means the same system that asks the questions also decides what the answers mean. That conflation is where bias compounds silently.

When conversation and evaluation are structurally independent, the evaluation layer cannot be influenced by tone, appearance, camera presence, or any signal outside the content of the response itself. The output is a readable transcript, not a score. The reviewer receives evidence, not conclusions. And the final call remains entirely human.
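The separation can be sketched as three functions that share nothing except a text transcript. Everything here is a simplified assumption: the function names are hypothetical, and the keyword match stands in for whatever evaluator a real system would use. The point is the shape of the interfaces, not the matching logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Turn:
    question: str
    answer: str


@dataclass(frozen=True)
class EvidenceItem:
    criterion: str
    quote: str   # verbatim answer text, never a score


def conduct_conversation(questions, answer_fn):
    """Conversation layer: asks questions and records text. It judges nothing."""
    return [Turn(q, answer_fn(q)) for q in questions]


def extract_evidence(transcript, criteria):
    """Evaluation layer: receives transcript text only.

    criteria maps a criterion name to keywords (a stand-in for a real
    evaluator). The output is quoted evidence, not a ranking or pass/fail.
    """
    items = []
    for turn in transcript:
        for criterion, keywords in criteria.items():
            if any(k.lower() in turn.answer.lower() for k in keywords):
                items.append(EvidenceItem(criterion, turn.answer))
    return items


def human_decision(reviewer_id, evidence, decision, rationale):
    """Only a named human produces the decision, and must explain it."""
    if not rationale.strip():
        raise ValueError("a decision requires a written rationale")
    return {"reviewer": reviewer_id, "decision": decision,
            "rationale": rationale, "evidence_count": len(evidence)}


answers = {"Describe a recent project.": "I rebuilt our payroll export in Python."}
transcript = conduct_conversation(list(answers), answers.get)
evidence = extract_evidence(transcript, {"python": ["python"]})
result = human_decision("r-7", evidence, "advance", "Relevant hands-on work.")
print(result["decision"], result["evidence_count"])  # prints "advance 1"
```

Because the evaluation layer's only input is a list of `Turn` objects, there is no field through which tone, appearance, or camera presence could reach it.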

This is the difference between AI on rails and AI running unsupervised. Bounded systems with clear separation of concerns produce outcomes that can be explained, audited, and defended. Monolithic systems that score candidates behind closed doors produce outcomes that can only be taken on trust, which is exactly the problem.

The trust deficit in AI screening exists because most systems ask for trust without earning it. They promise oversight they do not deliver. They claim transparency while operating as black boxes. They put a human “in the loop” who has no real authority, no real context, and no real power to change anything.

Where This Leaves Us

The term “human-in-the-loop” has become marketing language. It reassures without illuminating. It satisfies compliance without delivering accountability. And in a regulatory environment where the legal stakes are rising fast, it is becoming a liability rather than a protection.

Meaningful oversight is not about placing a person inside a system. It is about building systems where human judgment is structurally empowered, where every evaluation is explainable, where no automated decision is final, and where the people reviewing evidence have the authority, the context, and the time to actually use it.

That is not a feature to add later. It is a foundation to build on.